Posts by skgiven


1)

(Message 2269)

Posted 30 Dec 2013 by skgiven Post:
GTX 780 Ti: ~2,250 s
GTX 770: 3,616 s
GTX 670: 4,980 s

Win7 x64, 8GB DDR2133, Intel(R) Xeon(R) CPU E3-1265L V2 @ 2.50GHz (8 threads)

Task 28124806, work unit 11741759, host 5194: sent 29 Dec 2013, 22:10:28 UTC; reported 30 Dec 2013, 1:16:06 UTC; completed, waiting for validation; run time 3,615.51 s; CPU time 35.62 s; credit pending; Period Search Application v100.00 (cuda55)
Task 28038972, work unit 11728673, host 5194: sent 29 Dec 2013, 18:46:25 UTC; reported 29 Dec 2013, 21:48:12 UTC; completed and validated; run time 4,980.47 s; CPU time 41.03 s; credit 480.00; Period Search Application v100.00 (cuda55)

MSI Afterburner (RivaTuner) readings for the two GPUs:
GPU power: 56% and 62%
GPU temperature: 63C and 47C
GPU usage: 98% and 97%
Core clock: 1163MHz and 1110MHz
GDDR clock: 3506MHz and 3005MHz
GDDR usage: 621MB and 457MB

Regarding AVX vs SSE3, on an i7-3770K there seems to be a difference of roughly 3% to 15% in run times. I expect you would need an ix-4xxx CPU to benefit fully from AVX code developments:

Task 28194832, work unit 11760270, host 53841: sent 30 Dec 2013, 0:47:58 UTC; reported 30 Dec 2013, 4:06:53 UTC; completed and validated; run time 8,747.55 s; CPU time 8,665.78 s; credit 480.00; Period Search Application v102.10 (sse3)
Task 28194788, work unit 11755863, host 53841: sent 30 Dec 2013, 0:47:58 UTC; reported 30 Dec 2013, 4:06:53 UTC; completed, waiting for validation; run time 7,855.23 s; CPU time 7,774.79 s; credit pending; Period Search Application v102.10 (sse3)
Task 28136775, work unit 11746774, host 53841: sent 29 Dec 2013, 22:36:14 UTC; reported 30 Dec 2013, 2:58:03 UTC; completed and validated; run time 7,588.37 s; CPU time 7,517.23 s; credit 480.00; Period Search Application v102.10 (avx)

If the GPU and CPU WUs are the same size, just processed by different apps, then a GTX 770 may be about twice as fast as a single i7 CPU thread, but it has only about 1/4 of the performance of the entire CPU. This seems to be the case, because they get the same credit (480).
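A minimal Python sketch of the arithmetic behind the last two paragraphs, using the run times listed above. It assumes, as the post does, that the CPU and GPU work units are the same size, and it assumes all 8 threads of the i7 crunch concurrently (`CPU_THREADS` is an assumption, not a measured value). Note that the GPU tasks ran on host 5194 and the CPU tasks on host 53841, so, like the post, this compares apps on different machines.

```python
# Run times in seconds, copied from the task listings above.
GTX770_RUN = 3615.51            # Period Search v100.00 (cuda55), GTX 770
SSE3_RUNS = [8747.55, 7855.23]  # Period Search v102.10 (sse3), one i7 thread each
AVX_RUN = 7588.37               # Period Search v102.10 (avx), same i7 host
CPU_THREADS = 8                 # assumption: all 8 i7 threads crunch at once


def pct_longer(slow_s: float, fast_s: float) -> float:
    """How much longer (in %) the slower run took than the faster one."""
    return (slow_s / fast_s - 1.0) * 100.0


def gpu_vs_cpu(gpu_s: float, thread_s: float, threads: int) -> tuple[float, float]:
    """Throughput ratios, assuming GPU and CPU work units are the same size.

    Returns (GPU vs one CPU thread, GPU vs the whole CPU).
    """
    per_thread = thread_s / gpu_s  # GPU tasks finished per task on one thread
    return per_thread, per_thread / threads


if __name__ == "__main__":
    for run in SSE3_RUNS:
        print(f"SSE3 {run:,.2f} s: {pct_longer(run, AVX_RUN):.1f}% longer than AVX")
    one, whole = gpu_vs_cpu(GTX770_RUN, AVX_RUN, CPU_THREADS)
    print(f"GTX 770 = {one:.2f}x one thread, {whole:.2f}x the whole CPU")
```

Run as-is, it reports about 15.3% and 3.5% for the two SSE3 tasks, and about 2.10x (one thread) and 0.26x (whole CPU) for the GTX 770, in line with the "3% to 15%", "twice as fast" and "1/4" figures above.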


2)

(Message 647)

Posted 15 Jan 2013 by skgiven Post:

The newer Bulldozers are slightly better than their predecessors, but I think we are talking about a 15% improvement (ballpark). This is positive, but it's something you would expect with maturation. I expect AMD will keep improving and narrow the mid-to-high-end CPU performance gap. It's not like Intel have bothered with high-end CPUs for almost 3 years (there hasn't been a significant improvement since the i7-980X). Perhaps the 22nm refreshes will actually bring some benefits, but Intel's step down from 32nm to 22nm didn't produce any clock-for-clock improvement for crunchers. Even the 'reduced' power isn't that great. 18W sounds good, but the saving shrinks when you overclock and vanishes at around 4.5GHz. Measured against the whole system, 18W out of a 450W system is 4%; at normal clocks it would take years to pay for itself, and who buys a K or X model without OC'ing?

AMD's market is still primarily low- to mid-range desktop and business models, and laptops, where their CPU-integrated GPUs deliver excellent performance compared with Intel's weaker efforts. Your calculations match mine, but at the lower end of the market AMD systems stay better for around 2.5 to 3 years, which is approaching their life expectancy anyway. With lighter use I would extend that past 3 years.

For a long time I hung in with AMD CPUs, upgrading and selling the older CPUs on after 6 to 12 months. This was a convenient approach for me, as I liked to have reasonably high-end CPUs but wasn't keen on the price tags of the flagship Intel models (and I'm still not). When AMD started to lose significant performance ground, and perhaps more importantly when the power requirements of the Intel models dropped, I moved to reasonably high-end Intel models. For anyone crunching on GPUs, it makes sense not to be pushing another 30 to 50W of heat into the case, so forking out for a power-efficient PSU and CPU makes sense.

I think it's always best to choose your project(s) and build accordingly. On some projects AMDs perform very well, while on others they struggle. It's not as clear-cut as AMD vs NVidia, and Intels probably perform better on most projects, but I think it's worth looking into before taking the plunge on a new CPU/system. Generally an 8/12-thread Intel CPU will outperform a high-end AMD by ~50%, but if you're not prepared to fork out for a high-end Intel, then the AMDs are competitive and worth considering, on a per-project basis.
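The 18W/450W figure and the "years to pay for itself" remark can be checked with a quick payback estimate. This is a sketch under stated assumptions: the electricity price (0.15 per kWh) and the extra purchase cost of the more efficient CPU (60, same currency) are hypothetical placeholders, and the machine is assumed to crunch 24/7 at stock clocks.

```python
# Payback estimate for a CPU that saves ~18 W in a ~450 W crunching box.
SAVED_W = 18.0             # from the post
SYSTEM_W = 450.0           # from the post
HOURS_PER_YEAR = 24 * 365  # assumption: crunching around the clock

# Hypothetical placeholders: substitute your own tariff and price difference.
PRICE_PER_KWH = 0.15
PRICE_PREMIUM = 60.0


def saving_fraction(saved_w: float, system_w: float) -> float:
    """Share of the whole system's draw that the saving represents."""
    return saved_w / system_w


def payback_years(saved_w: float, price_per_kwh: float, premium: float) -> float:
    """Years of 24/7 running before the energy saving covers the price premium."""
    kwh_per_year = saved_w * HOURS_PER_YEAR / 1000.0
    return premium / (kwh_per_year * price_per_kwh)


if __name__ == "__main__":
    print(f"Saving: {saving_fraction(SAVED_W, SYSTEM_W):.0%} of system power")
    years = payback_years(SAVED_W, PRICE_PER_KWH, PRICE_PREMIUM)
    print(f"Payback: {years:.1f} years")
```

With these placeholder numbers it reports 4% and a payback of about 2.5 years; since the saving shrinks once you overclock, the payback period only gets longer from there.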


3)

(Message 495)

Posted 20 Dec 2012 by skgiven Post: With more results (of the non-skewed variety) I can now say the Windows app is somewhere between 8% and 18% faster than the Linux app running within a VM. Some of my results were skewed by an mt (multi-threaded) app that caused more tasks to run than there were threads! Ignoring those, there seems to be quite a bit of variation in individual task performance, and it's likely that other tasks affect these by a few percent. Again, it's probable that a native installation of Linux would outperform the VM, reducing the real difference between Linux and Windows. I might test this theory at some stage.

I'm still seeing no significant performance difference between an i7-3770K@4.2GHz and an i7-2600K@4.2GHz (+/-1%). Disappointing, but no surprise, as they are basically the same processors. The notable exceptions when it comes to CPU computing are:
- The 3rd-generation processors have a 0.1GHz clock increase over the i7-2600K, but the i7-2700K matches this anyway, and it's irrelevant as soon as you start to OC, which is the whole point of getting the K model.
- The 22nm lithography, coupled with a 77W TDP, should offer reduced power consumption (though it's not a lot), but this only holds up to ~4.5GHz, after which it's worse.
- 25.6GB/s max memory bandwidth, rather than 21GB/s, is good for the few projects that benefit from higher memory bandwidth. Maybe 2 or 3 projects, by a few percent.

There are greater potential benefits on the GPU side of crunching:
- The Intel® HD Graphics 4000, rather than the 3000, could in theory be used by a project to run OpenCL apps. I've been half expecting the emergence of a project to do just that, if not one that can span multiple resources (GPGPU/CPU).
- The 3770K supports PCIe 3.0 and brings the controller onto the CPU, but this really only benefits people with PCIe 3.0-capable GPUs, and arguably only those wishing to add a second GPU. Even then the benefits are disputable, probably quite small and limited to very few projects; apps that continuously send data over PCIe are notoriously slow anyway. Fast apps simply avoid PCIe usage as much as possible.

If you want a new system to support two high-end GPUs, then the 3770K or the i5-3570K would make sense.

For here, the i7-2600K/2700K/3770K and probably the 3820s are all roughly on par. They do about 50% more work than an i5-2500K (and similar), and AMD's 8-core FX-8320 just about surpasses the i5-2500K (~8%):
- i5-2500K (4 real cores, no HT): run time 4,805.70 s, CPU time 4,706.43 s, credit 120.00
- 8-core FX-8320: run time 8,900.27 s, CPU time 8,895.40 s, credit 120.00

Even if the AMD 8-core processors are relatively better at multi-threaded applications such as SimOne@home (possibly the only existing mt BOINC project), I could only see them pulling slightly away from the i5s, not eating enough into the i7s' lead to make them a good choice for crunching. Some crunchers have reported that AMD's 6-core predecessors actually outperform their 8-core processors on some projects, and that's pitting 6 old cores against 8 new cores.
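The ~8% figure follows from whole-chip throughput rather than per-task run time: the FX-8320 takes almost twice as long per task but runs twice as many tasks at once. A small sketch, assuming every core runs one task concurrently and that the quoted run times were measured under that full load:

```python
SECONDS_PER_DAY = 86_400


def tasks_per_day(cores: int, run_time_s: float) -> float:
    """Whole-chip throughput, assuming one task per core running concurrently."""
    return cores * SECONDS_PER_DAY / run_time_s


# Run times quoted in the post; both tasks earned 120 credits.
i5_2500k = tasks_per_day(4, 4805.70)
fx_8320 = tasks_per_day(8, 8900.27)

if __name__ == "__main__":
    print(f"i5-2500K: {i5_2500k:.1f} tasks/day, {i5_2500k * 120:,.0f} credits/day")
    print(f"FX-8320:  {fx_8320:.1f} tasks/day, {fx_8320 * 120:,.0f} credits/day")
    print(f"FX-8320 advantage: {fx_8320 / i5_2500k - 1:.1%}")
```

It reports an advantage of about 8% for the FX-8320, matching the post.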


4)

(Message 492)

Posted 19 Dec 2012 by skgiven Post: On average I have Windows 7 x64 being 23% faster than Dotschux in a VM (on the same system). A native (non-virtual) installation might do slightly better. Some people might also be interested to note that an i7-2600K @4.2GHz is just as fast as an i7-3770K @4.2GHz. I've noticed this on several other projects too, and haven't seen a project on which the i7-3770K is faster.


5)

(Message 471)

Posted 15 Dec 2012 by skgiven Post: Well done! Tasks seem to take about the same length of time on Windows as on Linux (a good thing). If anything, Windows might be slightly faster (I haven't run enough to know for sure, and some seem slightly faster than others; there is variation). I can still use the VMs for sim1...


6)

(Message 397)

Posted 27 Nov 2012 by skgiven Post: Yes, you can add any project you like.


7)

(Message 393)

Posted 22 Nov 2012 by skgiven Post: I found it really easy to set up. Just read the thread, follow the instructions, and you can have one of those coffee bean asteroid badges for Xmas.


8)

(Message boards : News : Badges)

Posted 21 Nov 2012 by skgiven Post: Yes, you need to upload an image. I just saved one from the site to my C:\ drive and then uploaded it. If you want more, you would need to make one image with your different research badges and upload that.


9)

(Message 387)

Posted 20 Nov 2012 by skgiven Post: Your rig is on page 2, ranked 33rd: http://asteroidsathome.net/boinc/top_hosts.php?sort_by=expavg_credit&offset=20

Computer ID: 3487
Rank: 33
Owner: Tex1954
Avg. credit: 1,457.25
Total credit: 20,280
BOINC version: 6.12.33
CPU: AuthenticAMD AMD Phenom(tm) II X6 1090T Processor [Family 16 Model 10 Stepping 0] (6 processors)
GPU: [2] NVIDIA GeForce 9800 GT (511MB)
Operating system: Linux 3.0.0
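Finding a host's ranking page from its rank is simple offset arithmetic. A sketch assuming 20 hosts per page, which is inferred from the link above (page 2 starts at `offset=20`) rather than from any documented setting; `top_hosts_url` and `page_number` are hypothetical helper names:

```python
from urllib.parse import urlencode

BASE_URL = "http://asteroidsathome.net/boinc/top_hosts.php"
PER_PAGE = 20  # assumption inferred from the link in the post


def top_hosts_url(rank: int, per_page: int = PER_PAGE) -> str:
    """Hypothetical helper: URL of the ranking page that lists `rank` (1-based)."""
    offset = (rank - 1) // per_page * per_page
    return BASE_URL + "?" + urlencode({"sort_by": "expavg_credit", "offset": offset})


def page_number(rank: int, per_page: int = PER_PAGE) -> int:
    """Hypothetical helper: 1-based page number on which `rank` appears."""
    return (rank - 1) // per_page + 1


if __name__ == "__main__":
    print(page_number(33), top_hosts_url(33))
```

For rank 33 this gives page 2 and reproduces the link quoted above.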


10)

(Message boards : News : Badges)

Posted 13 Nov 2012 by skgiven Post: I guess a coffee bean asteroid will turn up on the tail of my statseb.fr commit sig soon.


11)

(Message 378)

Posted 10 Nov 2012 by skgiven Post: On Windows, BOINC must be installed using the default installation settings, which means that in the third (maybe fourth?) screen of the installer the user must not click the Advanced button and select Protected Application Execution. If BOINC is already installed with the Protected Application Execution option selected, then the user must reinstall BOINC and make sure that option is not selected.

It's actually very easy. PAE/Daemon isn't good for several projects already. WCG still use their own version of BOINC, where PAE is enabled by default (for security reasons). If PAE is in use you will have to uninstall BOINC and then reinstall it; you can't just reinstall and unselect it, because it's greyed out.


12)

(Message 377)

Posted 10 Nov 2012 by skgiven Post: It's been known for years that BOINC benchmarks vary across operating systems. Remember that it's just a benchmark and doesn't necessarily reflect performance. Go by the return times of like-for-like tasks, not the benchmark. Unfortunately some credit systems are based on the benchmark, but ultimately it's about returning completed work.

Benchmarks can fluctuate from processor to processor and usually vary between readings on the same machine. If your reading is around half what you would expect, then perhaps the CPU has downclocked, from 3.4GHz to 1.6GHz for example. If you are crunching within a VM on Windows, the Windows benchmark, if taken without the VM running, should be higher; the core frequency of higher-end CPUs drops in steps of 100MHz as more cores are used. So a stock i7-2600K @3.4GHz with turbo on would only run one or two threads at 3.8GHz; running 4 threads, each would drop by 200MHz, and it would be at 3.4GHz if 8 threads were being used. The benchmark is for one core/thread, but it all depends on the BOINC CPU % use setting.

Then there is saturation: the CPU struggles to feed 8 threads due to contention. For some projects it's been demonstrated that using 7 threads is more productive than 8. The benchmark couldn't reflect that, as it's a generic benchmark and not specific to the project, which raises the question: why don't projects have their own benchmark? It would be more accurate as far as the project is concerned, and would help some people decide what system to get/use.
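Going by the return times of like-for-like tasks, and the 7-versus-8-thread observation, both come down to comparing throughput rather than per-task speed. A sketch with hypothetical run times (the numbers in `observed` are placeholders, not measurements from the post): fewer threads can win if contention slows every task enough.

```python
def tasks_per_hour(threads: int, avg_run_time_s: float) -> float:
    """Throughput when `threads` like-for-like tasks run side by side."""
    return threads * 3600.0 / avg_run_time_s


def best_thread_count(measured: dict[int, float]) -> int:
    """Pick the thread count with the highest throughput.

    `measured` maps thread count -> average run time (s) of like-for-like
    tasks observed at that setting.
    """
    return max(measured, key=lambda n: tasks_per_hour(n, measured[n]))


if __name__ == "__main__":
    # Hypothetical averages: with 8 threads, contention slows every task.
    observed = {7: 7000.0, 8: 8200.0}
    for n, t in observed.items():
        print(f"{n} threads: {tasks_per_hour(n, t):.2f} tasks/hour")
    print("Most productive:", best_thread_count(observed))
```

With these placeholder numbers, 7 threads return 3.60 tasks/hour against about 3.51 for 8, so 7 wins; if the 8-thread tasks only slowed to 7,500 s, 8 threads would come out ahead instead.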

|

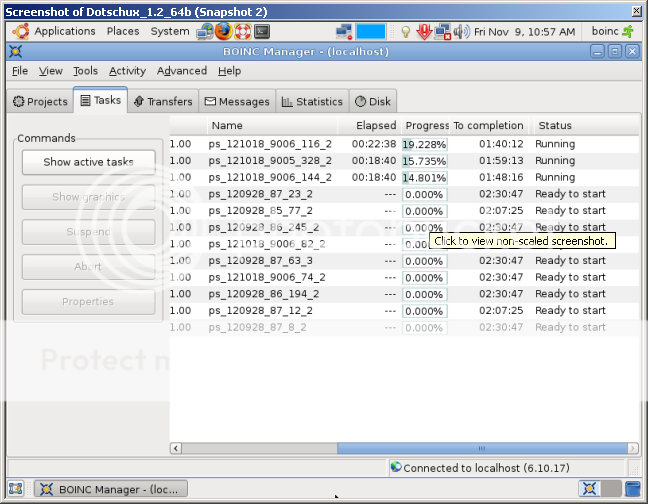

13)

(Message 376)

Posted 9 Nov 2012 by skgiven Post: Decided to give this a go and have some fun with Dotsch again :) Nice set of instructions, jujube!

I installed your method & got it to work, several problems though. It would only run 1 WU @ a time & I couldn't see the option in BOINC to possibly reset to 4 CPUs; shouldn't really need to do that though. It's on a laptop & apparently the screen isn't large enough to see the whole Options/Preferences box.

When setting this up I selected 3 CPUs and 1GB, but you could select 2 or 4 CPUs. After attaching to http://asteroidsathome.net/boinc/ it started to run 1 task and had one in the queue. BOINC Messages said 'Number of CPU's: 1'. I opened the Advanced Preferences (Computer Preferences) and set the CPUs to 100%. BOINC ran another benchmark and saw 3 CPUs. The machine (an i7-2600K @4.2GHz) is now running 3 tasks with 9 in the queue. Looks like ~2h/task. I had to click on the Sunday box (twice, to uncheck it), then Tab into the % processors box, where I just typed 100 and hit Enter. Not sure if this was necessary, as when I later changed the font sizes so I could see all of BOINC's preference boxes, it stayed at 100% after a reset.

Good luck,